A team of faculty and PhD students from Gallaudet University traveled to Barcelona, Spain, this April to participate in the ACM CHI Conference on Human Factors in Computing Systems (CHI 2026), the world’s premier international gathering for human-computer interaction research. Held April 13-17 at the Centre de Convencions Internacional de Barcelona, CHI drew 5,300 attendees from across the globe, and this year’s Gallaudet contingent made its presence felt with an award-winning paper, a poster, an additional full paper, and two co-organized workshops centering deaf and hard of hearing perspectives in technology design.

The delegation included Drs. Christian Vogler, Raja Kushalnagar, and Abraham Glasser, along with PhD students Shuxu Huffman, ’16, and Michaela Okosi. Their contributions spanned the full arc of the conference, beginning on opening day and running through its final sessions.

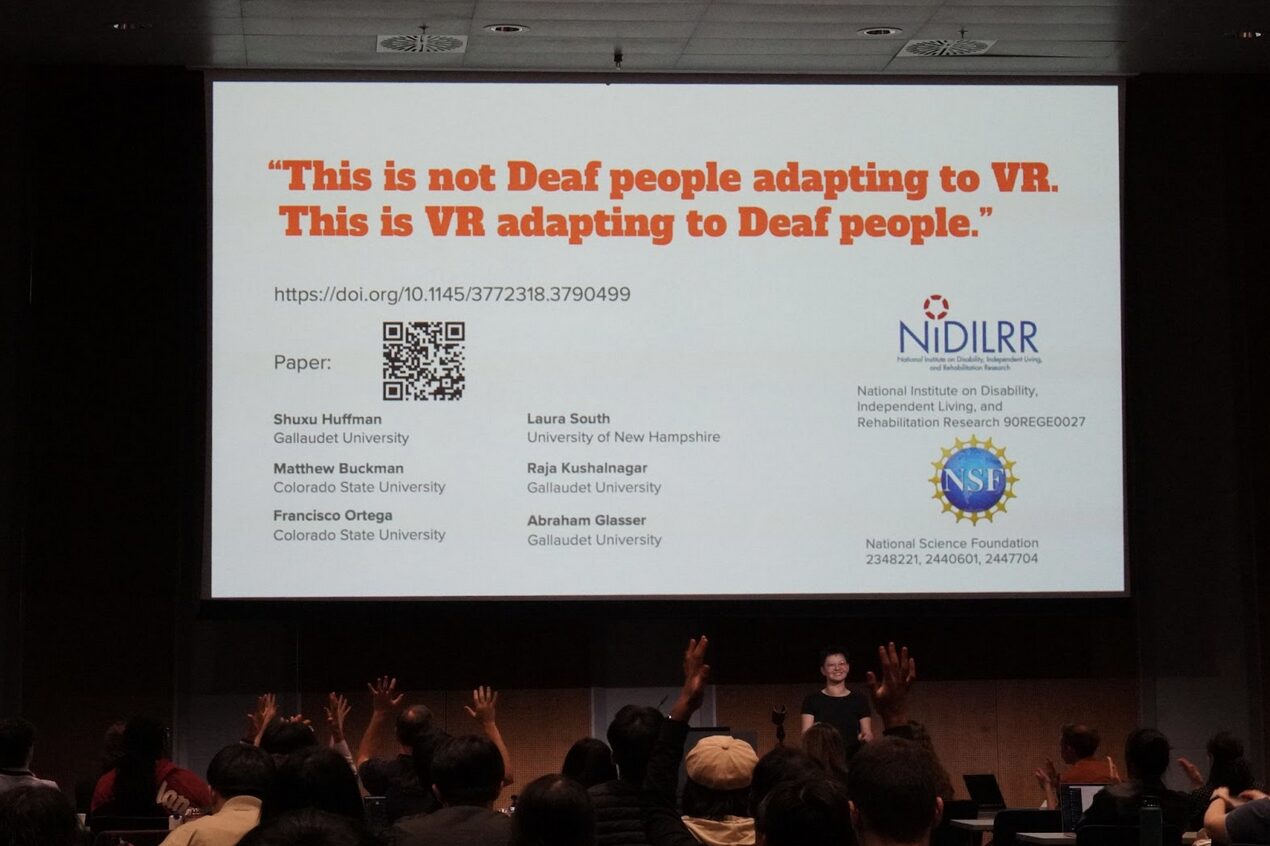

An Honorable Mention for Deaf-centered VR research

One of the week’s highlights came on Wednesday, April 15, when Shuxu Huffman presented the paper “Reclaiming VR Design Authority: Deaf Signers Shaping Immersive Classrooms,” with Dr. Raja Kushalnagar serving as Principal Investigator and advisor. The paper received an Honorable Mention, an award given to only the top 5% of submissions at CHI, recognizing work that the program committee identified as especially impactful and rigorous. The research examines how deaf signers can take the lead in shaping the design of virtual reality learning environments, moving beyond retrofitted accessibility toward experiences built from deaf perspectives from the ground up.

Two days earlier, on Monday, April 13, Huffman also presented a poster titled “‘We need a vision first’: Speculating Deaf-Centered Immersive Classrooms,” again with Dr. Kushalnagar as PI and advisor. The poster extended the conversation around what future immersive classrooms could look like when deaf community members are positioned as visionaries rather than as end users of someone else’s design choices.

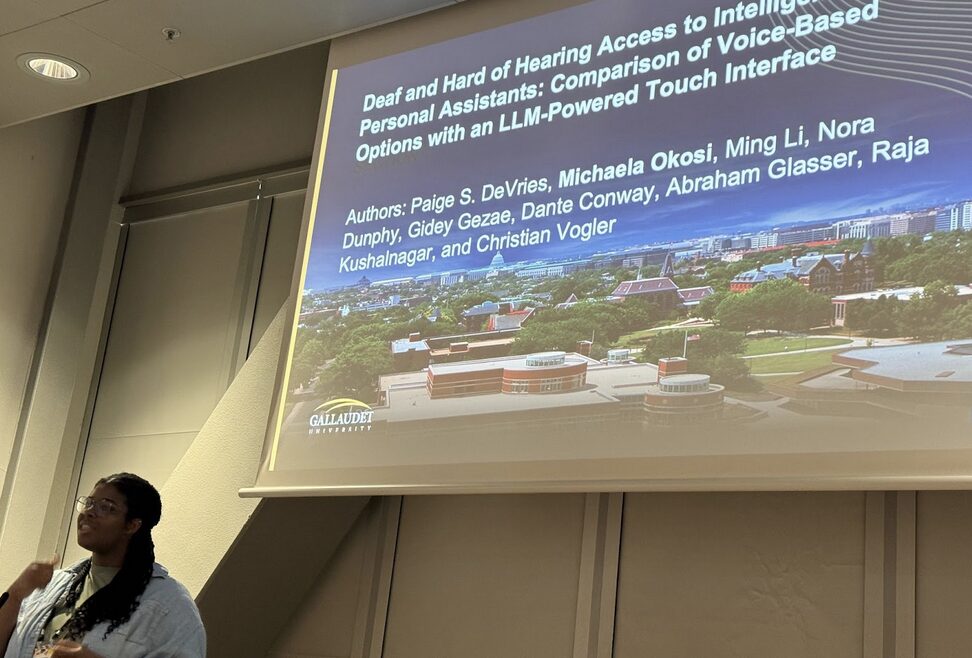

Rethinking voice assistants through an LLM-powered touch interface

On Thursday, April 16, Michaela Okosi presented the paper “Deaf and Hard of Hearing Access to Intelligent Personal Assistants: Comparison of Voice-Based Options with an LLM-Powered Touch Interface,” with Dr. Christian Vogler as Principal Investigator and Dr. Abraham Glasser as advisor. The work engages with a pressing question as voice assistants become increasingly embedded in everyday life: what happens to deaf and hard of hearing users who are often locked out of voice-first interaction paradigms? The paper compares conventional voice-based intelligent personal assistants against a touch-based alternative powered by a large language model, offering evidence for how interface modality shapes access and usability for deaf and hard of hearing users.

Two co-organized workshops on Deaf technologies and speech AI

Beyond their individual papers and poster, the Gallaudet team co-organized two workshops that drew together researchers working at the intersection of accessibility, AI, and deaf-centered design.

The first, “Sign-Up on Deaf Technologies: Reframing Access, Interaction, and Design,” took place on Tuesday, April 14. The workshop pushed participants to move past framings of accessibility as an accommodation tacked onto mainstream technologies, and instead to reframe access, interaction, and design around deaf communities as primary users and collaborators.

The second workshop, “Speech AI for All: The What, How, and Who of Measurement,” was held on Thursday, April 16. As speech AI systems proliferate, how these systems are evaluated, and for whom, has become a central question for equitable AI development. The workshop brought together participants to interrogate what gets measured, how measurement is conducted, and whose needs are represented in benchmarks and evaluation frameworks for speech AI.

A strong showing for Deaf-led HCI research

Taken together, the Gallaudet team’s contributions at CHI 2026 reflect a sustained research agenda that positions deaf and hard of hearing communities not as recipients of accessibility features but as leaders in shaping the technologies that affect their lives, from VR classrooms and personal assistants to the speech AI systems being rolled out across consumer and enterprise products. The Honorable Mention for Huffman’s VR paper, alongside the second paper, the poster, and the two workshops the team delivered over the course of the week, signals the growing visibility of deaf-centered HCI research within the broader CHI community.